Any SU render engines that render distorted textures?

-

@thomthom said:

So it doesn't seem to be the case. Though, it is weird what Jeff describes - seems to do the trick for him in Indigo. ...maybe Whaat knows something on this...

Very interesting thread. Thanks Thomthom for starting this discussion. So far, it has confirmed most of my theories.

Unless the rendering engine can interpolate UV mapping using four anchor points, it can't be done without exporting a pre-distorted texture.

The method of triangulating the mesh for the spherical mapping probably has something to do with how UVTools works. I believe that for faces with more than three vertices, I call the `position_material` method with four points (which probably results in a very slightly distorted texture, but enough to mess up the rendering engines). For faces with three vertices, I call the `position_material` method with only three points so the texture is not distorted (at least not enough to notice).

It is strange. Someone should test to see if the textures are distorted even for triangles after applying the spherical mapping. Maybe I'm wrong...

-

@whaat said:

Unless the rendering engine can interpolate UV mapping using four anchor points, it can't be done without exporting a pre-distorted texture.

Is that possible? Is a distorted texture at all possible to define with three points?
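On the three-point question: three anchor pairs pin down an affine map (move, rotate, scale, skew), and an affine map keeps parallel lines parallel, so it cannot carry a perspective-style distortion. A minimal sketch of that argument, assuming a parallelogram face whose fourth corner is `p1 + p2 - p0`; the helper names are made up for illustration:

```ruby
# For a parallelogram face with corners p0, p1, p2 and p3 = p1 + p2 - p0,
# an affine map fixed by three corners leaves no freedom for the fourth:
# its UV has to be uv1 + uv2 - uv0.
def affine_fourth_uv(uv0, uv1, uv2)
  [uv1[0] + uv2[0] - uv0[0], uv1[1] + uv2[1] - uv0[1]]
end

# True when the fourth UV is the one an affine map would produce,
# i.e. the mapping needs no four-point (distorted) description.
def affine_mapping?(uv0, uv1, uv2, uv3, eps = 1.0e-9)
  expected = affine_fourth_uv(uv0, uv1, uv2)
  (expected[0] - uv3[0]).abs < eps && (expected[1] - uv3[1]).abs < eps
end

affine_mapping?([0, 0], [1, 0], [0, 1], [1, 1])      # plain mapping
affine_mapping?([0, 0], [1, 0], [0, 1], [0.8, 0.8])  # fourth corner pulled in
```

If the fourth corner does not land where the affine map puts it, three points are not enough to describe the mapping.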

@whaat said:

The texturewriter class will write pre-distorted textures. It is a lot of work to implement this in a rendering engine because you have to first determine which faces are distorted and create a unique material for each of them.

And work out new UV data for the material you create.

But this method won't make new materials in the SU file, right?

@whaat said:

Very few users even mention it as a serious problem.

It comes up most often when a user downloads a model from the 3DW that has been photomatched and then they try to render it.

Could that not be because people are avoiding them due to this problem?

Also - recently there's been more stir about UV mapping here on the forum. As you saw with your UVTools 0.2 thread.

I've got some UV plugins I'd like to make - I've been looking into it - but since I render in V-Ray I try to avoid distorting textures.

-

@whaat said:

SkIndigo has had a workaround option for over a year (well before SketchUp added it as a standard feature). You can right-click on a face and go to 'Explode Distorted Texture'. It does the same thing as 'Make Unique Texture'

I've never been a fan of such workarounds - they make the organisation of the model difficult.

-

@thomthom said:

Any insight on how to process distorted textures correctly?

As I recall, we originally tried to figure out whether the image was distorted, but in the end we wound up having SketchUp save an image for everything, and having SketchUp give us the UVQ coordinates, and it finally just worked.

I just looked through the code and couldn't find anything special that we are doing. (Except to let SketchUp save a texture for all textured materials, and to let SketchUp give us the UVQs)

-

@al hart said:

As I recall, we originally tried to figure out whether the image was distorted, but in the end we wound up having SketchUp save an image for everything, and having SketchUp give us the UVQ coordinates, and it finally just worked.

From observation it seems that a texture is distorted when the Q value isn't 1.0. The manual says that the UVHelper ignores the Q value - but that isn't the case in reality. I had a conversation with Jeff ("jeff99") about this: http://forums.sketchucation.com/viewtopic.php?f=180&t=21700&start=30#p191715

Which resulted in this summary which I wrote.

@al hart said:

I just looked through the code and couldn't find anything special that we are doing. (Except to let SketchUp save a texture for all textured materials, and to let SketchUp give us the UVQs)

http://forums.sketchucation.com/viewtopic.php?f=180&t=22719

If you write out unique textures for every face, then don't you have to discard the UV data from SU? Because the unique texture would come out regular, but the one SU refers to with its UV data is distorted. Or am I misunderstanding something?

-

@thomthom said:

If you write out unique textures for every face, then don't you have to discard the UV data from SU? Because the unique texture would come out regular, but the one SU refers to with its UV data is distorted. Or am I misunderstanding something.

I am pretty sure that SketchUp gives us the full image, and normal UV values for normal, rotated and skewed images, but gives us UV values of (0,0), (1,0), (1,1), and (0,1) (or something like that) for the distorted image, so that the full distorted image is placed on the face. (It may be because we are, or because we aren't, using UVHelper to get the UVQ values - it is hard to remember just what we did to make it work.)

-

I've got a plugin that lets you inspect the UVQ values of the UVHelper: http://forums.sketchucation.com/viewtopic.php?f=180&t=21472

Using that, for all Normal, Scaled, Squashed and Skewed textures the Q value remains 1.0. But for distorted textures the Q value is not 1.0 - it has to be normalized to get flat UV values. The U and V values are also not 1's and 0's - but I'd think that you would have to override the UV values with 1's and 0's in order to fit the new unique texture over the face. That seems to make sense.
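As a rough illustration of the "normalize" step: dividing U and V by Q gives flat UV values. This is only a sketch; `UVQ` and `flat_uv` are made-up names, and the Struct stands in for the point-like object (with `x`, `y`, `z`) that SketchUp hands back, so that it runs outside SketchUp.

```ruby
# Stand-in for a UVQ value; in SketchUp this would be a point-like object
# where x = U, y = V and z = Q.
UVQ = Struct.new(:x, :y, :z)

# Divide U and V by Q to get flat UV values.
def flat_uv(uvq)
  q = uvq.z.to_f
  raise ArgumentError, "Q must not be zero" if q.zero?
  [uvq.x / q, uvq.y / q]
end

flat_uv(UVQ.new(0.5, 0.25, 1.0))  # Q == 1.0, so U and V pass through unchanged
flat_uv(UVQ.new(1.0, 0.5, 2.0))   # Q != 1.0, so both values are halved
```

Note this only flattens the values at each vertex; whether that is enough for a renderer is exactly what the rest of the thread is about.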

I'm still wondering if this is the only way though. Or maybe the SU way simply isn't compatible with the UV co-ordinates of most other apps. How do other 3D applications map textures? Generally.

-

Also - when an image is made unique - how will bump maps and other layers of the rendered material match up?

-

In my opinion, Whaat makes a pretty good explanation. The uv_helper / texture_writer combo is the key. The texture writer is used to produce the pre-distorted texture and the uv_helper returns UV coordinates that are specific to mapping that texture to the face. These are (potentially) different coordinates from the ones you receive directly from the PolygonMesh. I don't know the code off hand, but I do know that Tomasz has been doing this for quite some time; he may have been the first.

As for detecting when textures are distorted... I agree that you will very often see 'z' or 'q' (UVQ) values that are not 1.0, but I think you can get false negatives that way. Meaning, you can have distorted textures where the Q is still exactly 1. (This is speculation only)

@thomthom said:

If you write out unique textures for every face, then don't you have to discard the UV data from SU? Because the unique texture would come out regular, but the one SU refers to with its UV data is distorted. Or am I misunderstanding something.

I think you've got it dead on. The uv_helper and texture_writer work together; one produces the new UV, one produces the image. This doesn't affect the existing scene in any way. The uv_helper produces UV values that match the pre-distorted image, but they don't change the existing SU model. So the resulting image is unusable in the original model; the image no longer matches up with the original UV. You could replace the existing UV and the existing texture, but I think what you've basically done is recreate the "Make Unique" function.

-

@thomthom said:

I'm still wondering if this is the only way though. Or maybe the SU way simply isn't compatible with the UV co-ordinates of most other apps. How do other 3D applications map textures? Generally.

My suspicion is that it is the only way. I think most applications use the barycentric on triangle method because it's very fast. You can precalculate almost all the information, so for any point within the triangle, it's a simple and fast function to translate 3D point into a UV image coordinate. Is there an app out there that actually use UVQ instead of UV, potentially supporting distorted tex? No idea.
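For reference, here is a minimal sketch of the barycentric-on-triangle lookup mentioned above, in plain Ruby with 2D points as `[x, y]` arrays. The function names are illustrative only, and a real renderer would precompute the per-triangle terms rather than recompute them for every point.

```ruby
# Barycentric weights of 2D point p with respect to triangle (a, b, c).
def barycentric(p, a, b, c)
  det = (b[1] - c[1]) * (a[0] - c[0]) + (c[0] - b[0]) * (a[1] - c[1])
  raise ArgumentError, "degenerate triangle" if det.zero?
  w0 = ((b[1] - c[1]) * (p[0] - c[0]) + (c[0] - b[0]) * (p[1] - c[1])) / det.to_f
  w1 = ((c[1] - a[1]) * (p[0] - c[0]) + (a[0] - c[0]) * (p[1] - c[1])) / det.to_f
  [w0, w1, 1.0 - w0 - w1]
end

# Blend the three vertex UVs with the weights of p.
# tri is [a, b, c]; uvs holds the UV pair of each vertex in the same order.
def interpolate_uv(p, tri, uvs)
  w = barycentric(p, *tri)
  [0, 1].map { |i| w[0] * uvs[0][i] + w[1] * uvs[1][i] + w[2] * uvs[2][i] }
end

tri = [[0, 0], [1, 0], [0, 1]]
uvs = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
interpolate_uv([0.25, 0.25], tri, uvs)
```

Because the blend is linear in the three vertex UVs, it can only reproduce affine mappings, which fits the suspicion that a UV-only renderer cannot show a distorted texture without a pre-distorted image.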

-

thomthom, you asked about how unique textures are made. I don't have the exact code, but here are a couple of places to start:

- `TextureWriter.write(face, [true - front face / false - back face], filename)`
- `Face.get_UVHelper(front_face_coords, back_face_coords, texture_writer)`
- `UVHelper.get_front_UVQ` / `get_back_UVQ`

I think you can actually use this on every single face, even non-distorted ones, but you'll likely overwrite the same texture over and over and over, which is slow to say the least. So you'll want some management to track when to write a new image.
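A rough sketch of the "management" idea, not anyone's actual exporter code. It assumes `face` and `tw` behave like `Sketchup::Face` and `Sketchup::TextureWriter`, that `tw.load(face, true)` returns the texture handle, and that `write` takes (entity, side, filename) as I recall it from the API docs. The method name, the file naming and the `written` cache are made up for illustration, and untextured faces are not handled.

```ruby
# Write the front-side texture of a face once per TextureWriter handle and
# return the image path plus the UVQ values that go with that image.
def export_face_texture(face, tw, dir, written = {})
  handle = tw.load(face, true)             # register the front side, get its handle
  path = File.join(dir, "tex_#{handle}.png")
  unless written[handle]                   # skip handles that were already written
    tw.write(face, true, path)
    written[handle] = path
  end
  helper = face.get_UVHelper(true, false, tw)
  uvqs = face.vertices.map { |v| helper.get_front_UVQ(v.position) }
  [path, uvqs]
end
```

Passing the same `written` hash for every face means two faces that share a handle end up pointing at one image file instead of rewriting it.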

-

@unknownuser said:

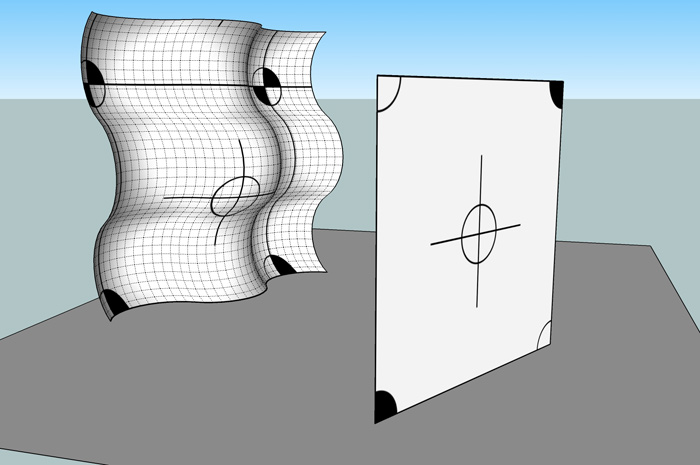

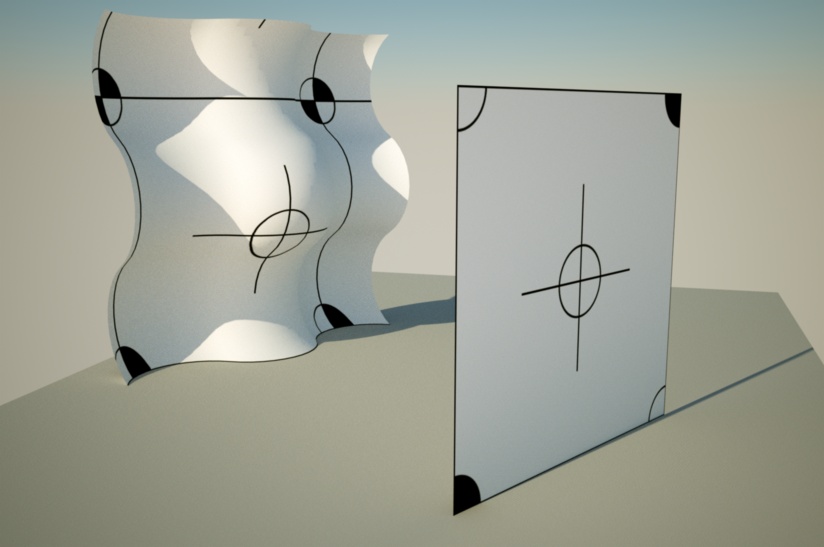

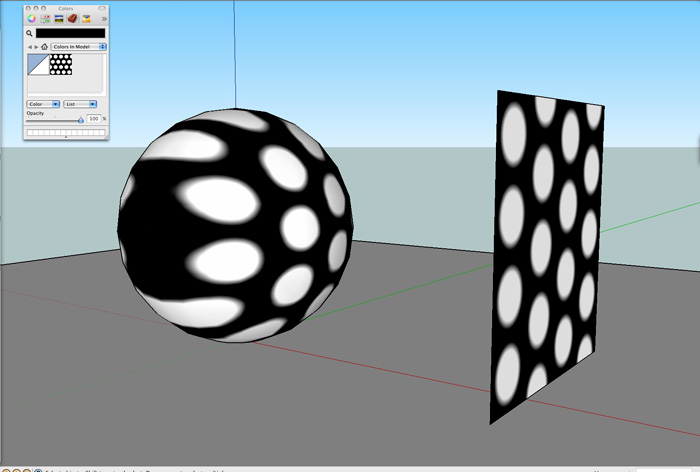

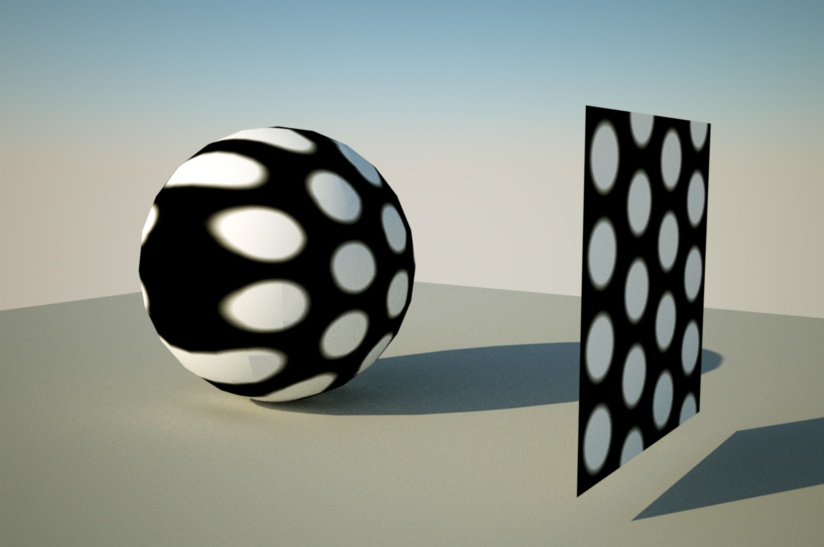

why are these working?

i was under the impression (and i'm almost positive i read this from whaat) that projected textures won't render properly..

are these not distorted?

there's only one texture in the model and no exporting of individual faces happened

It is quite possible that these images are not actually "distorted". There are four handles which can be used to position textures. (These are explained at the beginning of this thread.) Three of them move, stretch, rotate and skew the image. Most normal UV mappings consist of only these four things. Many strange looking mappings are only stretched and skewed. Only the upper right handle "distorts" the image. A distorted image cannot be easily defined by 4 UVQ values at the corners of the face. (Distort is a term we use to mean "even worse than skewed". So many images which seem distorted to us are only stretched and skewed.) (Remember, normal UVQ mapping can be used to display most projections of images onto spheres and curved surfaces which we see in most modeling and rendering packages.)

Instead, SketchUp performs a more complicated mapping of the image, saves an already distorted image for us on the disk, and we can then map the image to the face with default UVQ values.

-

why are these working?

i was under the impression (and i'm almost positive i read this from whaat) that projected textures won't render properly..

are these not distorted?

there's only one texture in the model and no exporting of individual faces happened

[i'm pretty sure i project these in the normal sense of projecting a SU texture..

import file as an image.. line it up with the object.. explode the image.. with , hold the cmmd key (mac) and sample the imported texture.. paint on the object i want the image projected onto]

[EDIT] nvm about what i thought whaat said.. i was thinking about this thread i read a while ago

http://www.indigorenderer.com/forum/viewtopic.php?f=17&t=7036

the dude called it a projected texture but i d/ld the model and it was in fact distorted (as dale said)

[edit2] well, i dunno.. he did say skewed via projection in there

-

Al... I really don't get this or any of your explanations...

What I can see is that your application is also making the texture unique...

Your application and also Hypershot does the same as everyone else...

They just export the texture special for each distorted surface...

-

@al hart said:

It is quite possible that these images are not actually "distorted".

yeah Al, i think you're right.

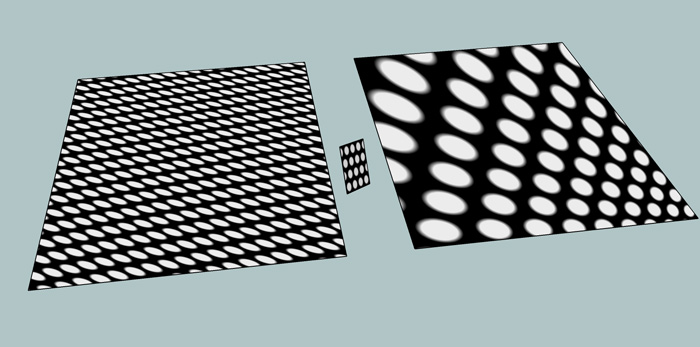

i tried setting up a texture to be projected onto a large flat plane coming in from a weird angle.. the resulting projection should be distorted but instead, it hits then tiles itself...

for the attached picture... i projected onto the left surface from the little square in the middle assuming it would give results similar to the square on the right (which is distorted) but it doesn't work out that way..

-

Thea Exporter deals with the sample well.

-

Tomasz - are you sure that the distorted texture isn't being exported as a separate (unique) texture...??

-

@chris_at_twilight said:

As for detecting when textures are distorted... I agree that you will very often see 'z' or 'q' (UVQ) values that are not 1.0, but I think you can get false negatives that way. Meaning, you can have distorted textures where the Q is still exactly 1. (This is speculation only)

I have never received false negatives by checking the 1.0 with enough precision. The posted TheaExporter sample uses TextureWriter and UVHelper. They work in tandem. The texture writer will create an additional ('virtual' unique) texture only when necessary. Sometimes TW can create just a single 'virtual' unique texture for two faces that are parallel to each other and share the same distorted texture.

I have spent many hours trying to understand how the TW works. There is no point in writing every single face to the TW, because it will take a lot of time. I first check whether a texture is distorted (Q != 1.0):

`if ((uvq.z.to_f)*100000).round != 100000`

If it is, it goes to TW, which usually leads to the creation of a unique texture. If it doesn't, one has to read the 'handle' returned by the TW. It points to the position of a texture in the TW 'stack'.
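The same Q check can be wrapped so it covers every vertex of a face. A minimal sketch, assuming each UVQ is indexable as `[u, v, q]` (plain arrays here) and using an absolute tolerance instead of the rounding trick above; `distorted?` and the default `eps` are hypothetical:

```ruby
# True if any vertex has a Q value that differs from 1.0 by more than eps.
def distorted?(uvqs, eps = 1.0e-5)
  uvqs.any? { |uvq| (uvq[2].to_f - 1.0).abs > eps }
end

distorted?([[0.0, 0.0, 1.0], [1.0, 0.0, 1.0], [1.0, 1.0, 1.0]])  # all Q are 1.0
distorted?([[0.0, 0.0, 1.0], [1.0, 0.0, 1.2], [1.0, 1.0, 1.0]])  # one Q is off
```

Only faces for which this returns true would then need to go through the TextureWriter.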

It would be interesting to learn how SU DEVELOPERS use the additional Q coordinate to add perspective distortion to a texture. I have done a search and haven't found any other software using this type of projection. I hope Google staff would share the trick with authors of rendering engines so the use of TW, which creates additional textures == materials in a renderer, won't be necessary.

I have implemented also a method without UVHelper using uv=[uvq.x/uvq.z,uvq.y/uvq.z], but the key is actually to learn how SU applies the projection. As far as I am aware no rendering software uses UVQ.
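One way to see why dividing at the vertices may not be enough. This is a sketch under an assumption the thread does not confirm: that Q acts like a homogeneous texture coordinate, so UVQ is interpolated linearly across the face and divided by Q at each sample. The values and the `lerp` helper are invented for the example.

```ruby
# Linear interpolation between two arrays of numbers.
def lerp(a, b, t)
  a.zip(b).map { |x, y| x + (y - x) * t }
end

a = [0.0, 0.0, 1.0]  # UVQ at one end of an edge
b = [2.0, 0.0, 2.0]  # UVQ at the other end (Q != 1.0)

# Divide at the vertices, then interpolate plain UV (what a UV-only renderer does).
per_vertex = lerp([a[0] / a[2], a[1] / a[2]], [b[0] / b[2], b[1] / b[2]], 0.5)

# Interpolate UVQ first, then divide by the interpolated Q (the assumed SU behaviour).
u, v, q = lerp(a, b, 0.5)
projective = [u / q, v / q]
```

At the midpoint the two approaches give different U values (0.5 versus about 0.667), even though they agree at both vertices. If the assumption holds, that gap is the distortion a UV-only renderer loses.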

@frederik said:

Tomasz - are you sure that the distorted texture isn't being exported as a separate *(unique)* texture...??

The method uses TW, which creates 'a second texture' == an additional 'Thea material'. I have not found a model so far that gives me a wrong projection. Unfortunately the method forces the creation of multiple Thea materials.

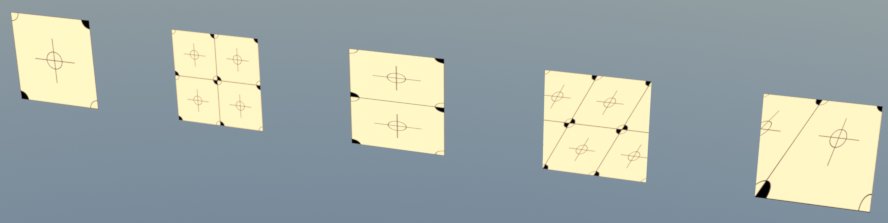

EDIT. I modified Thomas' test model. I have added two additional things - a group with a face painted with the default material, and a second face with a distorted texture which will have the same handle in the TextureWriter as the one right below.

-

@unknownuser said:

why are these working?

Maybe your curved surface is subdivided into so many smaller faces that the deviation isn't visually noticeable?

-

Tomasz: When you discover a distorted texture (from the Q value), and use TW to write out a unique material;

- What UV values do you send to your renderer?

- If the render material has a bumpmap applied to it - how is that handled? Won't you end up with mismatching bump?
