Any SU render engines that render distorted textures?

-

@thomthom said:

where do the p variable come from?

It is an index (starting from 1) of a point in a PolygonMesh. You get the PolygonMesh from face.mesh #.

You can probably get the point position in another way.

-

Also, why don't you use uv = mesh.uv_at(p, true)? It appears it would give the same result.

-

If you use my Probes plugin: http://forums.sketchucation.com/viewtopic.php?f=323&t=21472#p180592

Press Tab to see raw UVQ data.

By default it will use UVHelper to get the UV data.

Press F2 to make it sample the data from the PolygonMesh.

From my testing, the data never differs.

UVHelper seems to be made to sample UV data from a Face.

But if you have a PolygonMesh of the Face, it includes the UV data (provided you asked for that when you used face.mesh).

-

@thomthom said:

Also, why don't you use uv = mesh.uv_at(p, true)? It appears it would give the same result.

I simply use UVHelper because it seems to be designed for this task. What would be its purpose otherwise?

I am not so sure it would give the same result for two distorted faces sharing the same distorted texture (the last pair in the modified skp test scene).

BTW, I have found that there is some memory leak when using UVHelper, or at least those objects are not being cleaned up well by the garbage collector. In my exporter I stay away from UVHelper as long as I can. I read UV coordinates in all other scenarios using uv_at.

-

@thomthom said:

If you use my Probes plugin: http://forums.sketchucation.com/viewtopic.php?f=323&t=21472#p180592

Press Tab to see raw UVQ data.

By default it will use UVHelper to get the UV data.

Press F2 to make it sample the data from the PolygonMesh.

From my testing, the data never differs.

UVHelper seems to be made to sample UV data from a Face.

But if you have a PolygonMesh of the Face, it includes the UV data (provided you asked for that when you used face.mesh).

OK, try this: load a face with a distorted texture into the TW, then you will see the difference if you use the same TW to get the UVs from UVHelper.

What you have written is true as long as you use a blank TW. It gives coordinates in relation to the original 'picture', but when you load a face into the TW it 'makes it unique inside the TW', and therefore the UVs change.

It looks like this thread would better fit in the new developers section.
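For anyone following along, the two routes compared in this exchange can be sketched roughly as below. This is only a sketch and not code from anyone's exporter. It assumes the SketchUp Ruby API calls face.mesh, PolygonMesh#count_points, PolygonMesh#uv_at, TextureWriter#load, Face#get_UVHelper and UVHelper#get_front_UVQ; the helper names uvq_to_uv, mesh_front_uvs and helper_front_uvs are made up for this example, and it has not been run against the test scene from this thread.

```ruby
# Hypothetical helper: turn a UVQ triple (anything with x, y, z) into plain [u, v]
# by dividing through by the third component.
def uvq_to_uv(uvq)
  q = uvq.z.to_f
  [uvq.x.to_f / q, uvq.y.to_f / q]
end

# Route 1: read the front-face UVs straight from the PolygonMesh.
# Flag 1 asks face.mesh to include front UVQ data; point indices start at 1.
def mesh_front_uvs(face)
  mesh = face.mesh(1)
  (1..mesh.count_points).map { |p| uvq_to_uv(mesh.uv_at(p, true)) }
end

# Route 2: read the front-face UVs through UVHelper, using a TextureWriter
# that has had this face loaded into it first.
def helper_front_uvs(face, tw)
  tw.load(face, true)
  uvh = face.get_UVHelper(true, false, tw)
  face.outer_loop.vertices.map { |v| uvq_to_uv(uvh.get_front_UVQ(v.position)) }
end
```

In the Ruby console you would pass a real textured face, plus Sketchup.create_texture_writer as tw. The two lists are not in the same order (mesh points versus loop vertices), so compare them by position rather than by index.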

-

@unknownuser said:

I have never received false negatives by checking the 1.0 with enough precision.

Really? That's good to know. That's a much faster test.

As for why some projected textures aren't distorted, I think Al and thomthom are both right, given the respective circumstances. It could be that the texture is not truly 'distorted', as Al suggests; it could be that the level of subdivision is high enough that you just don't see the distortion, like thomthom said.

And Tomasz hit it right on the head as far as using tw and uvhelper; you have to make sure you are using a tw 'loaded' with the face in question, before using it in the uvhelper.

-

@unknownuser said:

if you will use same TW to get uv from UVHelper.

Use a TextureWriter to get UV data?

-

@thomthom said:

@unknownuser said:

if you will use same TW to get uv from UVHelper.

Use a TextureWriter to get UV data?

It is the required parameter:

uvHelper = face.get_UVHelper(true, false, **tw**)

-

Aaah!!! That's why it asks for a TW. Duh!

That fills in a great hole in the API doc. Thanks Tomasz!

-

Here's a simple test I did. It doesn't take nested groups etc. into account, but it's a rough Ruby version of Make Unique.

-

Just a quick word as to what you're seeing.

The texture coordinates in SU are mostly 2D, but there is support for projected textures, in which the 3rd element of the texture coordinate is a projection, i.e. by dividing through by the 3rd element you get a 2D texture coordinate.

You can get an OK approximation by doing the projection at the vertices and then interpolating across the polygon in 2D (this is what LightUp does), or you can interpolate the 3-element texture coordinate and do the divide at each pixel, which is slower but gives the right answer. Not sure there are many renderers that do that.

Adam
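To make the difference between the two approaches concrete, here is a small plain-Ruby sketch (no SketchUp needed). It is only an illustration along a single edge: the UVQ values are made up, and the function names are hypothetical rather than anything from LightUp or SketchUp.

```ruby
# Linear interpolation between two numbers.
def lerp(a, b, t)
  a + (b - a) * t
end

# Approximation: divide by Q at the two vertices, then interpolate in 2D.
def uv_divide_then_interpolate(uvq0, uvq1, t)
  u0, v0 = uvq0[0] / uvq0[2], uvq0[1] / uvq0[2]
  u1, v1 = uvq1[0] / uvq1[2], uvq1[1] / uvq1[2]
  [lerp(u0, u1, t), lerp(v0, v1, t)]
end

# Per-sample version: interpolate all three components, then divide.
def uv_interpolate_then_divide(uvq0, uvq1, t)
  u = lerp(uvq0[0], uvq1[0], t)
  v = lerp(uvq0[1], uvq1[1], t)
  q = lerp(uvq0[2], uvq1[2], t)
  [u / q, v / q]
end

a = [0.0, 0.0, 1.0]   # UVQ at one end of an edge (made-up values)
b = [2.0, 0.0, 2.0]   # UVQ at the other end; its plain UV is (1.0, 0.0)

puts "divide at vertices: #{uv_divide_then_interpolate(a, b, 0.5).inspect}"
puts "divide per sample:  #{uv_interpolate_then_divide(a, b, 0.5).inspect}"
```

With these values the two methods agree at both ends of the edge but not in the middle: at the midpoint the first gives U = 0.5 and the second gives U of about 0.667. That gap is the distortion that goes missing when the divide is only done at the vertices.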

-

Oh, and the corollary of this is that if you subdivide the faces into smaller pieces, you'll get a progressively more accurate rendering. So try cutting the distorted quad into 16 quads.

Adam
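The effect of subdividing can be checked numerically with a short plain-Ruby sketch. It works along one edge only, uses made-up [U, Q] values, and assumes the new vertices created by the subdivision get their exact projected U; the names exact_u and max_error are hypothetical.

```ruby
# Linear interpolation between two numbers.
def lerp(a, b, t)
  a + (b - a) * t
end

# Exact U at parameter t: interpolate the [U, Q] pair, then divide.
def exact_u(p0, p1, t)
  lerp(p0[0], p1[0], t) / lerp(p0[1], p1[1], t)
end

# Largest error of "divide at the vertices, interpolate in 2D" when the edge
# is cut into `pieces` equal segments, checked at `samples` steps per segment.
def max_error(p0, p1, pieces, samples = 20)
  worst = 0.0
  pieces.times do |i|
    t0 = i.to_f / pieces
    t1 = (i + 1).to_f / pieces
    u0 = exact_u(p0, p1, t0)   # projected U at the segment's two vertices
    u1 = exact_u(p0, p1, t1)
    (0..samples).each do |s|
      local = s.to_f / samples
      approx = lerp(u0, u1, local)
      exact = exact_u(p0, p1, lerp(t0, t1, local))
      worst = [worst, (approx - exact).abs].max
    end
  end
  worst
end

p0 = [0.0, 1.0]   # [U, Q] at one end (made-up values)
p1 = [2.0, 2.0]   # [U, Q] at the other end

[1, 2, 4, 16].each do |pieces|
  puts format("%2d piece(s): max error %.5f", pieces, max_error(p0, p1, pieces))
end
```

The printed error shrinks as the number of pieces goes up, which matches the suggestion to cut the distorted quad into smaller quads.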

-

@adamb said:

You can get an OK approximation by doing the projection at the vertices and then interpolating across the polygon in 2D (this is what LightUp does), or you can interpolate the 3-element texture coordinate and do the divide at each pixel, which is slower but gives the right answer. Not sure there are many renderers that do that.

SU is using OpenGL. Does it mean that SU, for its own 'rendering', does the same interpolation and sends the 'unique' texture to OpenGL?

-

@unknownuser said:

SU is using OpenGL. Does it mean that SU, for its own 'rendering', does the same interpolation and sends the 'unique' texture to OpenGL?

I suspect so. It is a lot faster to create the distorted image as a bitmap and then send it to OpenGL, rather than starting to subdivide faces.

Also, that would explain why SketchUp makes the pre-distorted image available to 3DS output and to us.

-

@unknownuser said:

@adamb said:

You can get an OK approximation by doing the projection at the vertices and then interpolating across the polygon in 2D (this is what LightUp does), or you can interpolate the 3-element texture coordinate and do the divide at each pixel, which is slower but gives the right answer. Not sure there are many renderers that do that.

SU is using OpenGL. Does it mean that SU, for its own 'rendering', does the same interpolation and sends the 'unique' texture to OpenGL?

No, it means SU sends 3-component texture coordinates to OpenGL and allows OpenGL to interpolate and divide at each pixel.

But as Al says, given you can 'bake in' your projection to a unique texture once you're happy, it's not a big deal.

Adam

-

@adamb said:

No, it means SU sends 3-component texture coordinates to OpenGL and allows OpenGL to interpolate and divide at each pixel.

Just as Adam says, OpenGL provides functions not just for 2 texture coordinates (UV), but for 3 and even 4: glTexCoord3f and glTexCoord4f.

-

I'm curious: for those render engines that render distorted textures, what's the result if you add a bump map, like the one attached? Exaggerated bump so it's clearly visible.

-

@thomthom said:

I'm curious: for those render engines that render distorted textures, what's the result if you add a bump map, like the one attached? Exaggerated bump so it's clearly visible.

"True" bump maps, where you assign the bump as a second texture, do not work, because SketchUp gives you a pre-distorted texture, which then works with UVs of 0,0, and 1,1, to use for the main texture. But SketchUp does not give you information on how to distort the second texture.

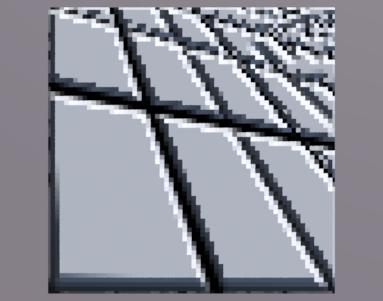

Here the carpet texture is distorted properly, but the bump map is not distorted.

"auto-bump" where a single texture is used for the color and for the bump map, does work, because the bump map is distorted as well.

Here the bump map effect matches the distortion of the image in SketchUp.

(The distorted SketchUp image was used as the bump map.)

Distorted SketchUp Material

This could be solved for multiple texture bump maps - but you would have to let SketchUp distort the main texture and then let SketchUp distort the bump map texture as well.

-

@al hart said:

"True" bump maps, where you assign the bump as a second texture, do not work, because SketchUp gives you a pre-distorted texture, which then works with UVs of 0,0, and 1,1, to use for the main texture. But SketchUp does not give you information on how to distort the second texture.

That's what I suspected. Which is a problem.

-

@thomthom said:

That's what I suspected. Which is a problem.

If you are an IRender nXt user, we can probably write a routine to distort the bump maps for you.

If not, you can still do it in Ruby. Write a Ruby script that uses the texture writer to replace the diffuse SketchUp texture with the bump map texture, then uses the texture writer to save the distorted bump map, then restores the original texture. Ditto for any specular texture.
